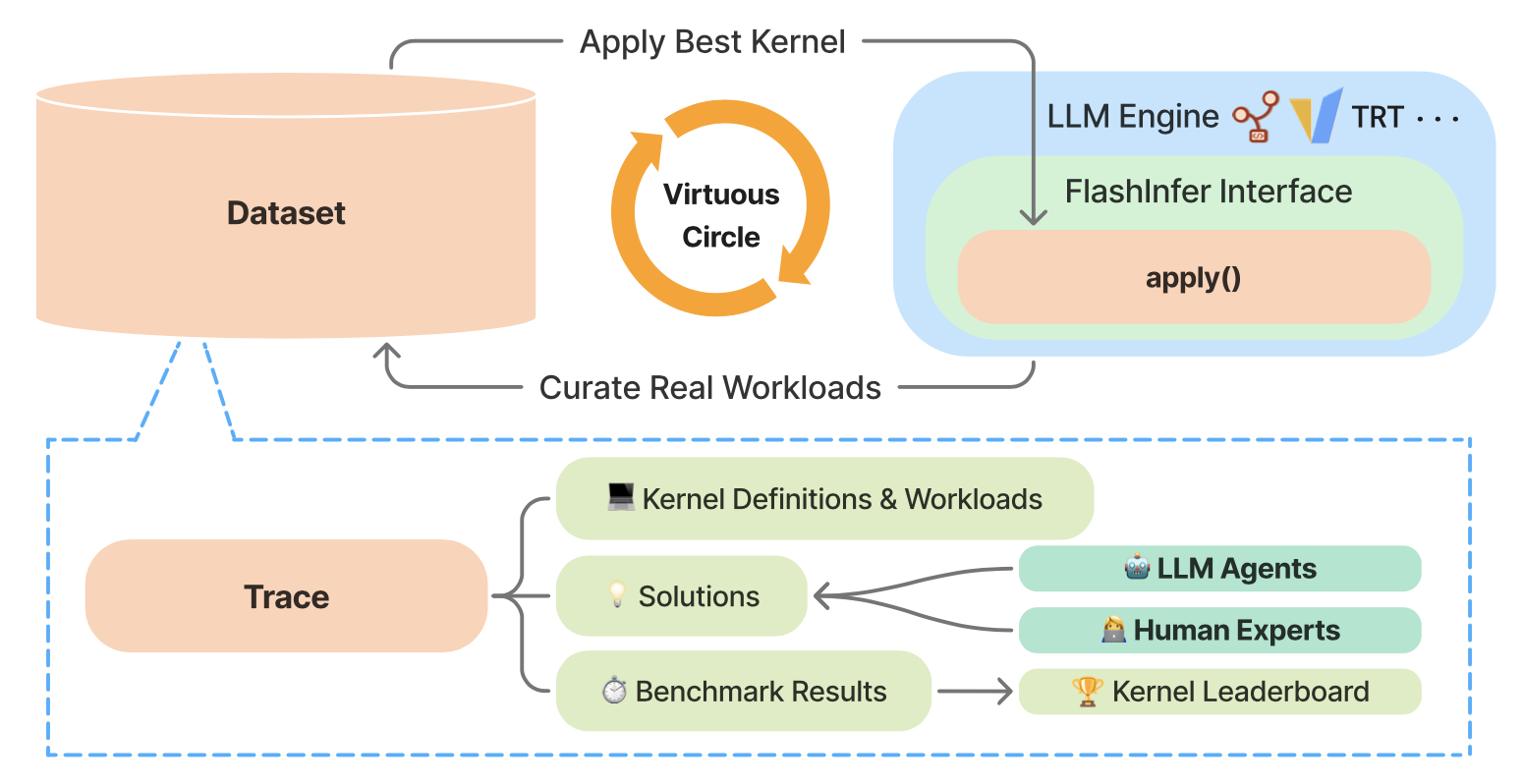

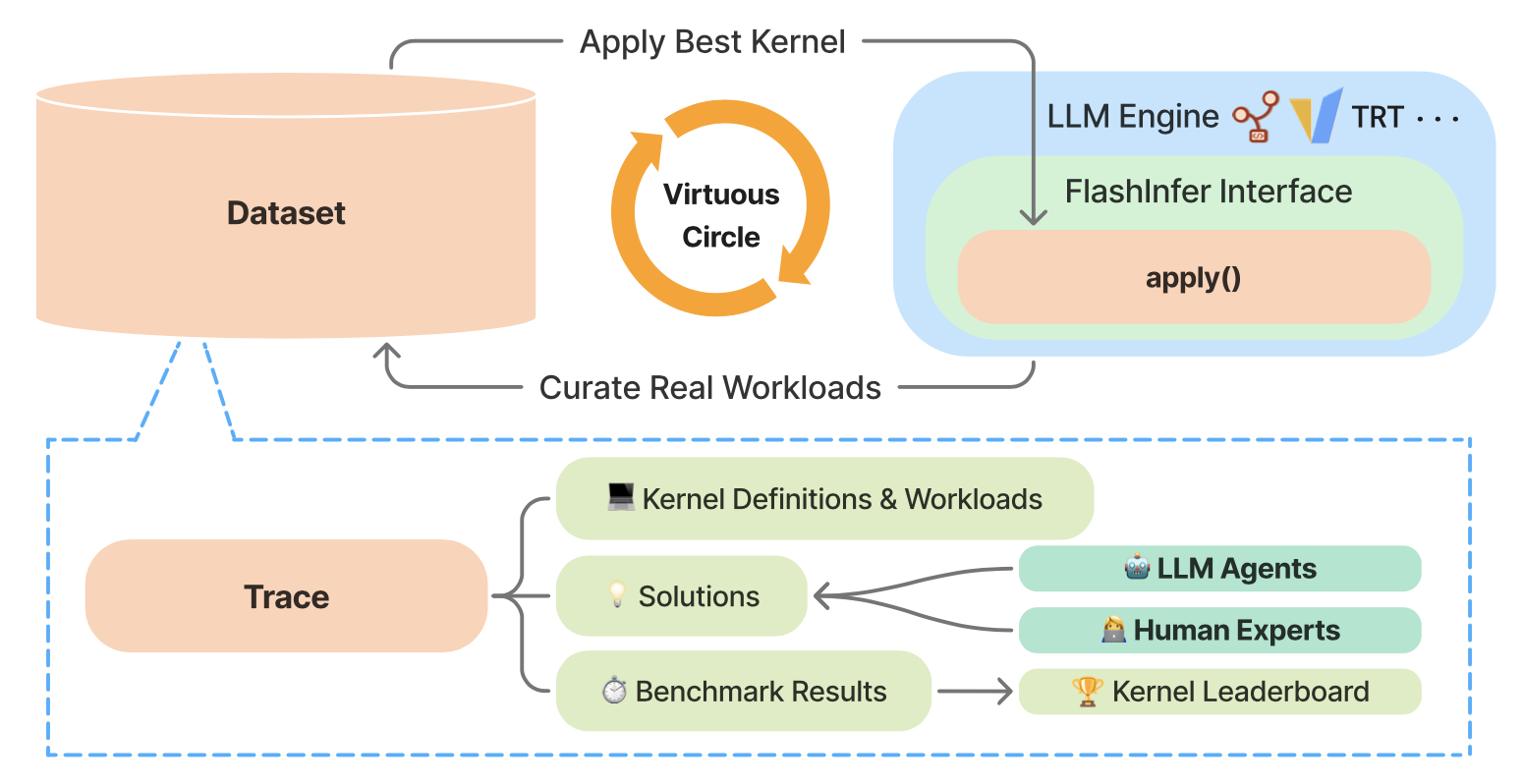

FlashInfer-Bench: Building the Virtuous Cycle for AI-driven LLM Systems

A standardized, closed-loop framework that connects kernel generation, benchmarking, and deployment

Routing algorithm for real-world LLM services—balancing TTFT SLOs and cost via selective API offload.

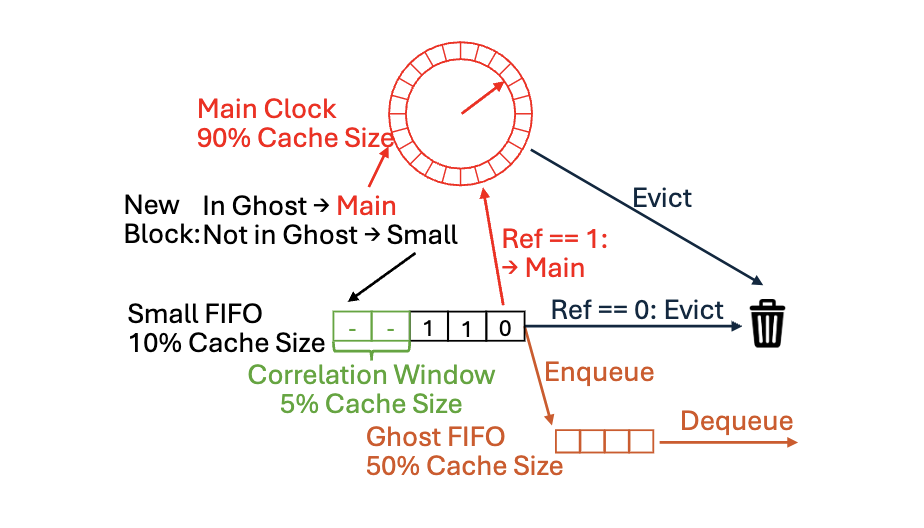

Production-oriented cache replacement algorithm for VMware vSAN.

A middle-layer API that bridges local web agents with the browser environment, with Overleaf and Google Workspace integrations.

Published in Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing, 2023

This paper introduces a novel approach for identifying the possible large language models (LLMs) involved in text generation. Instead of adding an additional classification layer to a base LM, we reframe the classification task as a next-token prediction task and directly fine-tune the base LM to perform it. We utilize the Text-to-Text Transfer Transformer (T5) model as the backbone for our experiments. We compare our approach to the more direct approach of utilizing hidden states for classification. Evaluation shows the exceptional performance of our method in the text classification task, highlighting its simplicity and efficiency. Furthermore, interpretability studies on the features extracted by our model reveal its ability to differentiate distinctive writing styles among various LLMs even in the absence of an explicit classifier. We also collected a dataset named OpenLLMText, containing approximately 340k text samples from humans and LLMs, including GPT3.5, PaLM, LLaMA, and GPT2.
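The idea of treating classification as next-token prediction can be illustrated with a small sketch. This is not the paper's code: the label words, the toy vocabulary ids, and the function name `classify_from_next_token` are all assumptions made for illustration. In practice the logits would come from the first decoding step of the fine-tuned T5 model; here they are hand-written numbers.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def classify_from_next_token(next_token_logits, label_token_ids):
    """Pick the label whose token has the highest next-token logit.

    next_token_logits: logits over the whole vocabulary for the first
        generated token (hypothetical values in this sketch).
    label_token_ids: dict mapping each label to the vocabulary id of the
        token that stands for it (hypothetical mapping).
    """
    labels = list(label_token_ids)
    # Restrict attention to the label tokens; no separate classifier head.
    scores = [next_token_logits[label_token_ids[name]] for name in labels]
    probs = softmax(scores)
    best = max(range(len(labels)), key=lambda i: probs[i])
    return labels[best], dict(zip(labels, probs))

# Toy 8-token vocabulary with made-up logits and label ids.
toy_logits = [0.1, 2.0, 0.3, -1.0, 0.5, 0.0, 1.2, -0.4]
toy_label_ids = {"human": 1, "gpt3.5": 4, "palm": 6, "llama": 2, "gpt2": 7}
predicted, label_probs = classify_from_next_token(toy_logits, toy_label_ids)
print(predicted, label_probs)
```

Because the prediction is read directly from the language model's output distribution, no extra classification layer has to be trained on top of the hidden states, which is the contrast the abstract draws.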

Published in arXiv, 2024

We introduce WebLLM, an open-source JavaScript framework that enables high-performance LLM inference entirely within web browsers. WebLLM provides an OpenAI-style API for seamless integration into web applications, and leverages WebGPU for efficient local GPU acceleration and WebAssembly for performant CPU computation. With machine learning compilers MLC-LLM and Apache TVM, WebLLM leverages optimized WebGPU kernels, overcoming the absence of performant WebGPU kernel libraries.

Undergraduate level course, Carnegie Mellon University, Department of Mathematical Sciences, 2023

Matrices and Linear Transformations

Graduate level course, Carnegie Mellon University, Machine Learning Department, 2024

Machine Learning with Large Datasets